My run-tracking app, RaceRunner, has features focused on racing and training for races. One of these features is support for alternative methods of ending runs. Here is an example.

The typical way to stop a run in a run-tracking app is to tap a button, and RaceRunner supports this. But because of the physical exertion involved in running a race, a runner is sometimes in no condition to unlock an iPhone and tap a button at the end of a race. Even unlocking can be tricky because sweat often prevents Touch ID from working, so the runner must tap in the passcode instead.

So RaceRunner supports two alternative ways of ending a run. First, a run can stop automatically after a certain distance. This is great for time trials or if the runner does not trust the race organizers’ distance measurement. (A time trial involves running a certain distance, typically a race distance, as fast as possible.) Second, a spectator can use RaceRunner to stop the runner’s run.

Both of these alternatives have problems. The certain-distance method may result in a recorded time that differs from the actual time. The spectator method requires a cooperative spectator with an iPhone. So I implemented a third method: Siri.

Having just released a new version of RaceRunner with Siri support, I thought I’d share some learnings and pedagogic resources for other developers interested in implementing Siri support.

Implementation

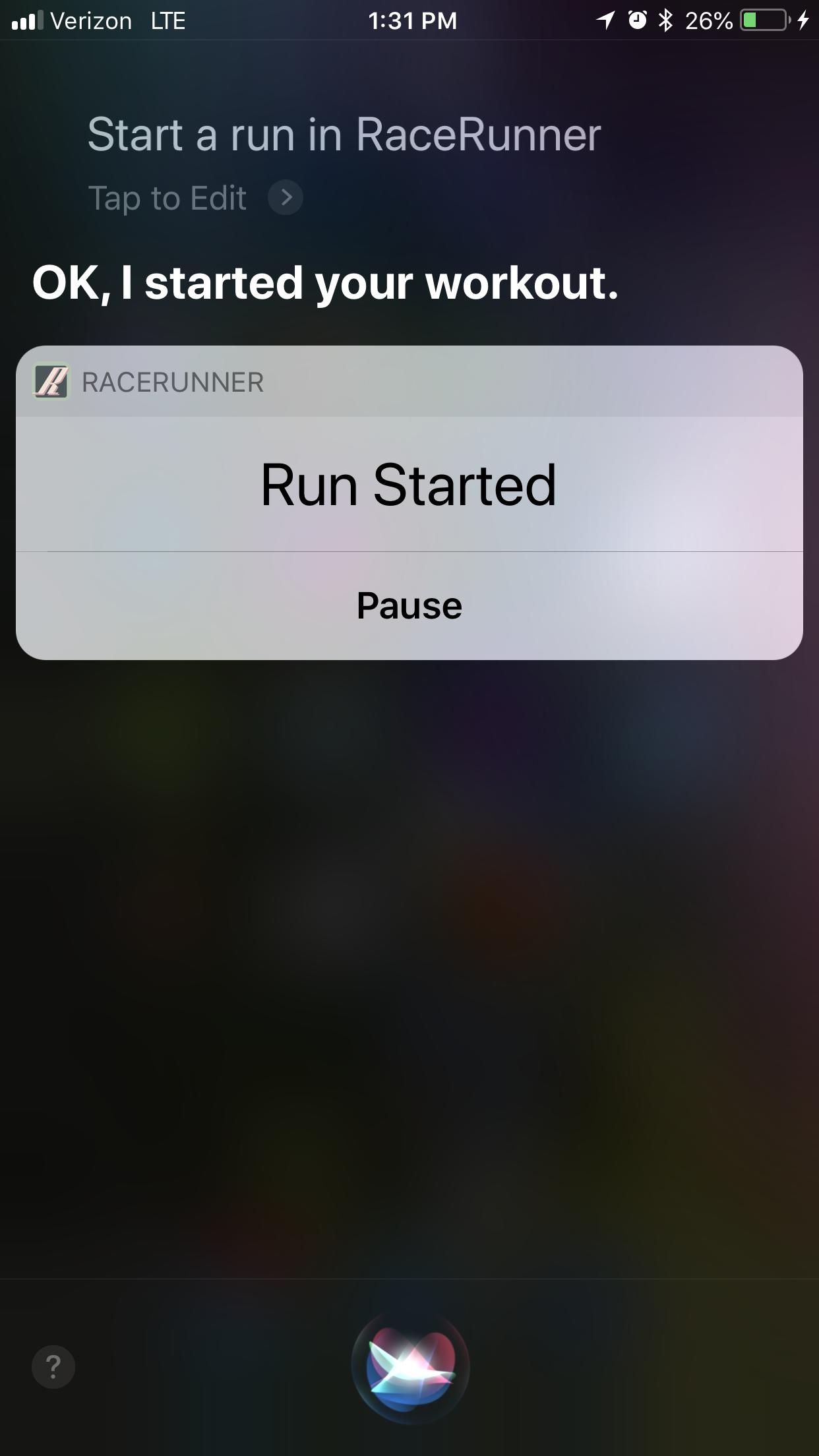

RaceRunner Workout Started with Siri

This blog post is not a tutorial on Siri support. That tutorial will drop after I grok iOS 12’s Siri enhancements. Instead, I’ll outline here the steps for implementing Siri support as it currently exists and point you to some pedagogic resources.

The steps are:

0. Decide the appropriate type of intent domain, if any, to implement. “Intent domain” is Apple jargon that means “domain of activity for which Siri currently supports third-party app integration”. RaceRunner uses the workouts intent domain. Other possibilities are messaging, lists and notes, payments, VoIP calling, media, visual codes, photos, ride booking, car commands, CarPlay, and restaurant reservations.

1. The intent domains contain domain-specific intents. Decide which are appropriate to implement. The workout intent domain includes intents for starting, pausing, resuming, ending, and canceling a workout. I decided to implement all except cancelling, which RaceRunner does not support. As an aside, I used alternate spellings of “cancel(l)ing” in the preceding two sentences as a protest against English orthography.

2. Create an intents extension in your app.

3. Configure the extension’s Info.plist to support the appropriate intents for your app. Here is RaceRunner’s.

4. Enhance the boilerplate file IntentHandler.swift with code for the intents you intend to support. Here is RaceRunner’s implementation, showing the four intents mentioned above.

5. In AppDelegate.swift, implement a function to handle supported intents. By “handle intent”, I mean “update the appropriate model in the appropriate way”. For example, when this function in RaceRunner receives a stop-workout intent, AppDelegate calls the stop() function of RunModel, the run model. Here is RaceRunner’s AppDelegate.swift.
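As a rough illustration of step 4, a handler for the four workout intents might look like the sketch below. The protocols and response types come from the Intents framework. The choice of the `.handleInApp` response code, which asks the system to hand the intent to the app in the background, is an assumption inferred from step 5 and not a copy of RaceRunner’s code. The `#if os(iOS)` guard is there only so the snippet can sit in a file that is also compiled for other platforms.

```swift
#if os(iOS)
import Intents

// Principal class of the intents extension, based on Xcode's template.
// Each handle(intent:completion:) method defers the real work to the app
// by answering with .handleInApp (an assumed design, see the note above).
class IntentHandler: INExtension,
    INStartWorkoutIntentHandling,
    INPauseWorkoutIntentHandling,
    INResumeWorkoutIntentHandling,
    INEndWorkoutIntentHandling {

    // One object handles every supported intent.
    override func handler(for intent: INIntent) -> Any {
        return self
    }

    func handle(intent: INStartWorkoutIntent,
                completion: @escaping (INStartWorkoutIntentResponse) -> Void) {
        completion(INStartWorkoutIntentResponse(code: .handleInApp, userActivity: nil))
    }

    func handle(intent: INPauseWorkoutIntent,
                completion: @escaping (INPauseWorkoutIntentResponse) -> Void) {
        completion(INPauseWorkoutIntentResponse(code: .handleInApp, userActivity: nil))
    }

    func handle(intent: INResumeWorkoutIntent,
                completion: @escaping (INResumeWorkoutIntentResponse) -> Void) {
        completion(INResumeWorkoutIntentResponse(code: .handleInApp, userActivity: nil))
    }

    func handle(intent: INEndWorkoutIntent,
                completion: @escaping (INEndWorkoutIntentResponse) -> Void) {
        completion(INEndWorkoutIntentResponse(code: .handleInApp, userActivity: nil))
    }
}
#endif
```

The extension also has to list these four intent classes in its Info.plist (step 3), or Siri will not route them to the handler.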

A clarification. The purpose of AppDelegate is to “ensure your app interacts properly with the system and with other apps [, giving] you a chance to respond to important changes.” The details of another type, for example RunModel, are outside the scope of this purpose. Direct interaction with RunModel by AppDelegate would therefore violate the single-responsibility principle. So RaceRunner’s AppDelegate doesn’t directly interact with RunModel. Instead, AppDelegate passes all intents to another type that does know about RunModel and calls the appropriate RunModel function for the four supported intents.
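One way to arrange this separation is sketched below. The names `WorkoutCommand`, `RunControlling`, and `WorkoutIntentRouter` are hypothetical, as are the `start()`, `pause()`, and `resume()` method names; only `stop()` is mentioned above. The post does not show RaceRunner’s actual intermediary type, so treat this as one possible shape and not the real implementation.

```swift
import Foundation
#if os(iOS)
import Intents
#endif

// The four workout actions supported via Siri (hypothetical type).
enum WorkoutCommand {
    case start, pause, resume, end
}

// Hypothetical abstraction over the run model, so the router can be
// exercised without the real RunModel.
protocol RunControlling: AnyObject {
    func start()
    func pause()
    func resume()
    func stop()
}

// Hypothetical intermediary: the only type that knows how a command
// maps onto the run model. AppDelegate just forwards to it.
final class WorkoutIntentRouter {
    private let run: RunControlling

    init(run: RunControlling) {
        self.run = run
    }

    func perform(_ command: WorkoutCommand) {
        switch command {
        case .start: run.start()
        case .pause: run.pause()
        case .resume: run.resume()
        case .end: run.stop()
        }
    }
}

#if os(iOS)
extension WorkoutCommand {
    // Translates a SiriKit workout intent into a command.
    // Returns nil for any intent outside the four supported ones.
    init?(intent: INIntent) {
        switch intent {
        case is INStartWorkoutIntent: self = .start
        case is INPauseWorkoutIntent: self = .pause
        case is INResumeWorkoutIntent: self = .resume
        case is INEndWorkoutIntent: self = .end
        default: return nil
        }
    }
}
#endif
```

With something like this in place, AppDelegate’s intent-handling function (for example `application(_:handle:completionHandler:)`, which UIKit added in iOS 11) would only need to build a `WorkoutCommand` from the incoming intent, pass it to the router, and call the completion handler with a suitable response.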

Pedagogic Resources

SiriKit’s learning curve is steep, in part because of terminology like “intent domain”. But I found that, after consuming the following resources, the framework made sense to me, and I was ready to get coding.

SiriKit Developer Documentation

Hey Siri, How Do I Get Started?

Adopting SiriKit changed significantly in iOS 11. A pre-iOS 11 tutorial took me down a rabbit hole until I realized that the tutorial was no longer relevant.

Learnings

Here are some learnings I gained by adding Siri support to RaceRunner.

0. Siri constitutes a new UI in addition to whatever UI the app already has. This can violate an app’s assumption that only one UI is interacting with the model. If so, adding Siri support entails removing this assumption. Here is an example from RaceRunner.

The app has a screen that shows runs in progress. The screen is implemented in RunVC. There is a button that pauses a run in progress or resumes a paused run. In pseudocode, here was the implementation of this button’s behavior before Siri support:

    if RunModel's state is paused
        tell RunModel to resume the run
        change the button label from "Resume" to "Pause"
    else
        tell RunModel to pause the run
        change the button label from "Pause" to "Resume"
    endif

The problem was the assumption that the button’s label should change between Pause and Resume when, and only when, the user taps the pause/resume button. But Siri support introduced another scenario in which the button label should change: when the user pauses or resumes the run via Siri. In my initial Siri implementation, the button’s label was not changing when the user paused or resumed via Siri because there was no tap on the button. The fix was to decouple what happens when the user taps the button (RunModel is paused or resumed) from the changing of the button label. When RunModel changes state to paused or in-progress, RunModel posts a notification about this change to NotificationCenter. RunVC registers for this notification and changes the button label in response to it. I refactored all communication from RunModel to interested parties, namely RunVC and the menu screen, to occur via NotificationCenter.
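Here is a minimal sketch of that decoupling using only Foundation. The notification name `runStateDidChange`, the `State` enum, and the `RunScreenController` class (a UI-free stand-in for RunVC) are assumptions made for illustration; RaceRunner’s real names and types are not shown in this post.

```swift
import Foundation

extension Notification.Name {
    // Hypothetical notification name.
    static let runStateDidChange = Notification.Name("runStateDidChange")
}

// Simplified stand-in for RunModel: it announces every state change,
// no matter who asked for it (a button tap or Siri).
final class RunModel {
    enum State { case inProgress, paused }

    private(set) var state: State = .inProgress {
        didSet {
            NotificationCenter.default.post(name: .runStateDidChange, object: self)
        }
    }

    func pause() { state = .paused }
    func resume() { state = .inProgress }
}

// Stand-in for RunVC. The tap handler only talks to the model;
// the label changes only in response to the notification.
final class RunScreenController {
    private let model: RunModel
    private var token: NSObjectProtocol?
    private(set) var buttonTitle = "Pause"

    init(model: RunModel) {
        self.model = model
        // queue: nil delivers the notification synchronously on the posting thread.
        token = NotificationCenter.default.addObserver(
            forName: .runStateDidChange, object: model, queue: nil
        ) { [weak self] _ in
            self?.buttonTitle = (model.state == .paused) ? "Resume" : "Pause"
        }
    }

    deinit {
        if let token = token {
            NotificationCenter.default.removeObserver(token)
        }
    }

    func pauseResumeTapped() {
        if model.state == .paused {
            model.resume()
        } else {
            model.pause()
        }
    }
}
```

Because the label follows the notification and not the tap, calling `pause()` on the model from anywhere, including code that runs in response to a Siri request, updates the button the same way a tap does.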

1. Before I implemented Siri support, I never needed to debug a process other than the one associated with the current scheme. But with extensions, there are two relevant processes: the host of the extension (in my case, Siri) and the main app (in my case, RaceRunner). When running an extension scheme, the developer chooses the host app to run. In my case, this was Siri. As I developed the extension, I was able to use breakpoints in the extension code because it was running in the Siri process. But I could not initially use breakpoints in RaceRunner when I was running the extension scheme because RaceRunner was in another process. It turns out that Xcode can attach its debugger to other running processes. By clicking Debug -> Attach to Process -> RaceRunner, I enabled debugging of the RaceRunner process. This let me debug new code in RaceRunner that runs in response to a Siri request.

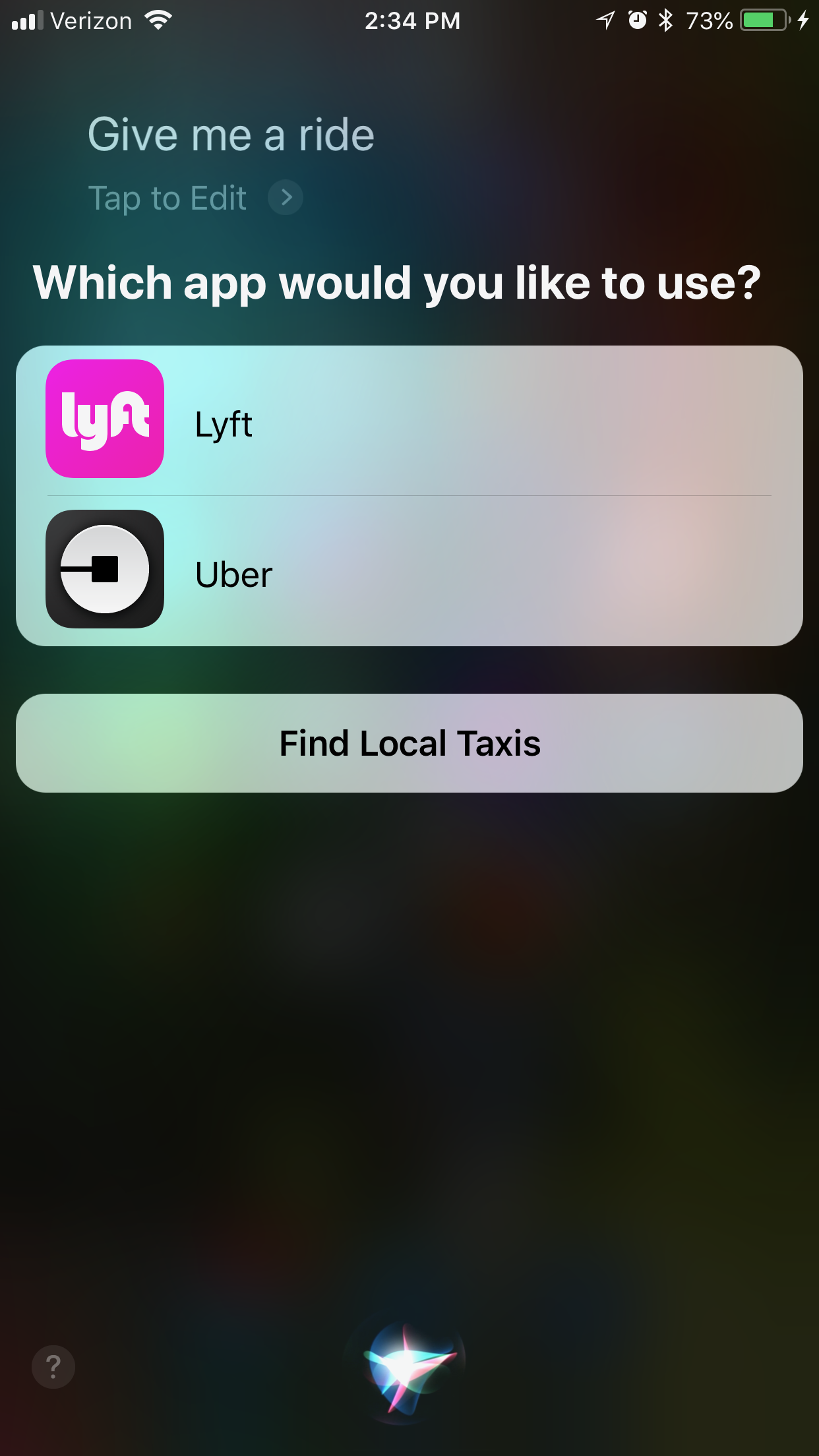

2. Siri does not allow the user to specify default apps for intent domains. Instead, when more than one app that can handle a request is present, Siri presents a menu and asks the user to select an app. Here is an example for ride booking:

Generic Ride Requested via Siri

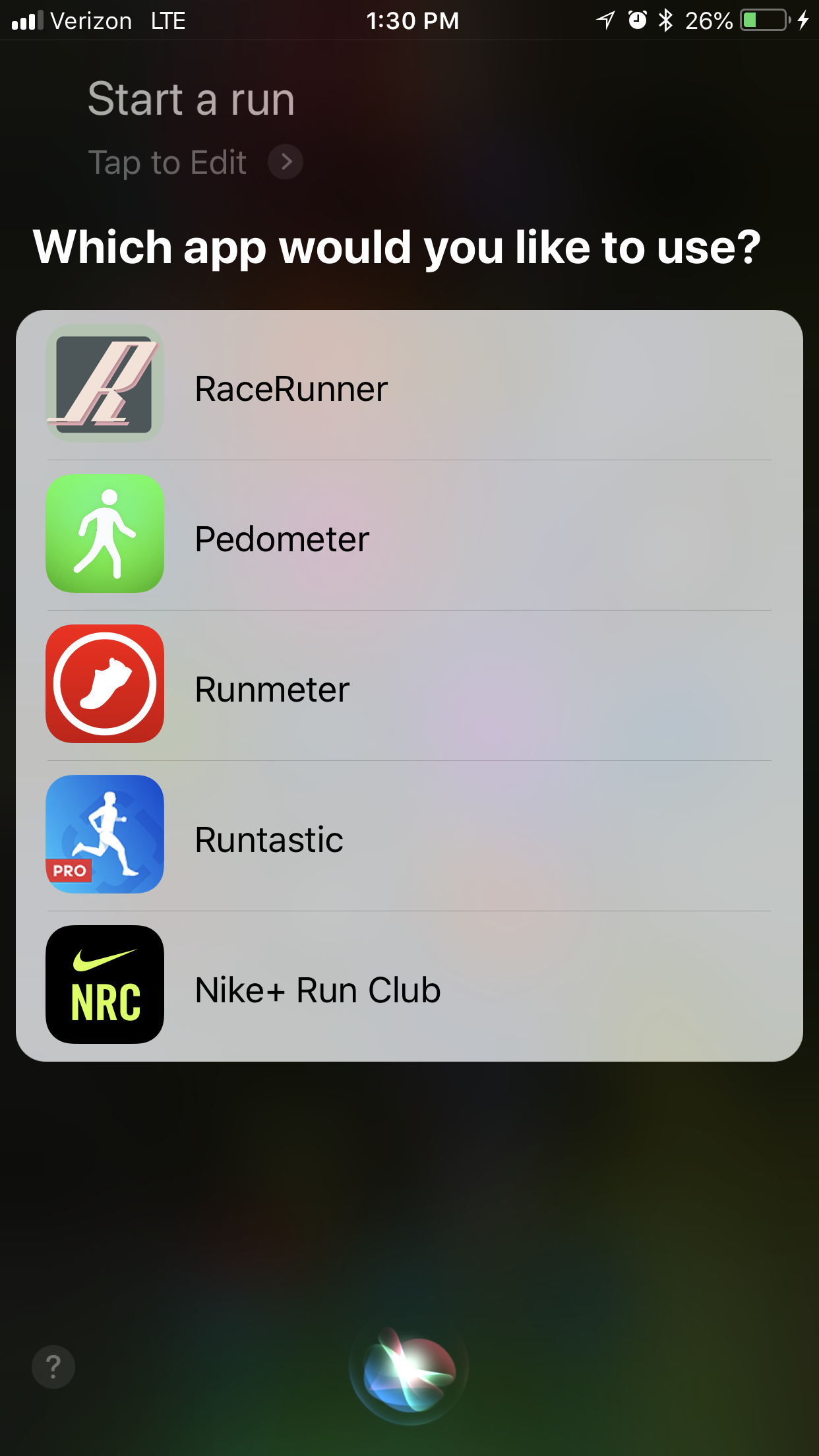

This limitation is unsurprising given iOS’s inability to allow users to set default apps for, say, meatspace navigation or web browsing. But it’s a problem for RaceRunner. Saying “start a run” to Siri causes the following screen to appear:

Generic Workout Initiated via Siri

To bypass this menu of workout apps, the user must say something like “start a run in RaceRunner”. The incantation “in RaceRunner” is particularly annoying for me because I have no interest in launching Pedometer++, Runmeter, Runtastic, or Nike+ Run Club via Siri. I have those apps installed for occasional reports of my physical activity (Pedometer++) or market research (the rest).

In light of the longstanding lack of support in iOS for setting default apps, as well as the business reasons for that lack, I hold out no hope for this feature being implemented for Siri. But Siri shortcuts, announced at WWDC 2018, assuage this pessimism. If I understand the relevant sessions correctly, shortcuts with trigger phrases like “start”, “pause”, “resume”, and “stop” are possible.

Closing Thoughts

The clunkiness of phrases like “start a run in RaceRunner” means that usability is not quite where I would like it to be. But I am pleased to have implemented Siri support for three reasons. First, I hope to get as many runners as possible using RaceRunner, and for some, Siri support is likely a must-have. Second, by forcing me to remove the assumption that only one UI is interacting with RunModel, implementing Siri support resulted in a cleaner architecture. Third, implementing Siri support was an important step towards implementing Siri shortcuts in a future release of RaceRunner, and I do believe that Siri shortcuts will be a game-changer in terms of easily starting, pausing, resuming, and stopping runs.